Vibe Coding Works. Not the Way Most Executives Think It Does.

I am not a developer. I have never written a line of production code in my life. And yet, for the last five weeks, I have been building real software — the Intelligence Age Scorecard, my paid AI readiness diagnostic, engineered to serve Fortune 500 executives and senior leaders. It runs on Cloudflare Workers, a D1 database, Stripe, and Claude API orchestration. I built it with Claude Code in VS Code. I did not hire engineers. I did not outsource to a development shop. I vibe coded it.

"Vibe coding," the term coined by Andrej Karpathy, describes a new mode of software creation: you describe what you want in natural language, an AI model writes the code, you iterate. It is the most over-hyped idea in technology today, and also one of the most consequential. Most executives I speak to have tried it once, gotten something that looked promising, watched it collapse the moment reality touched it, and quietly concluded that it was not yet ready.

They are wrong about the capability. They are right about the failure mode.

Here is what I have learned building a production system without writing code. It is not the blueprint the influencers will sell you. It is the one that survives contact with your own business.

How it started

In mid-March, I had an idea for a diagnostic tool that would sit between my keynote engagements and a broader product ladder, something that would give leaders a structured assessment of their organization's readiness for the Intelligence Age. I sketched it on paper. Then I opened Claude Code.

The first two weeks were euphoric. In three sessions I had a working prototype: Stripe checkout, an assessment engine, a basic report. I remember thinking: this is the leverage story. One person, a clear vision, and an AI doing the mechanical work.

Then came April 3. A single session, intended to add one feature, destroyed three weeks of work. The model had made six unrelated "improvements" while it was in the codebase. Database schemas drifted. A migration ran in the wrong order. Customer-facing pages went blank for hours. I had no tests to catch any of it.

That night, I did not blame the tool. I blamed myself. The model had done exactly what I asked it to do, with no constraints, no guardrails, and no explicit scope. It had optimized for helpfulness. I had optimized for speed. The collision was inevitable.

What did not work

Before the blueprint, the anti-patterns. Most vibe coding advice skips them, and the failures are where the lessons live.

Stacked changes in a single session.

"While you are in there, also fix X and Y." This is the single most common source of regression. Every change has to be atomic, or the blast radius compounds.

Letting the model self-answer its own review questions.

AI assistants default to forward motion. If you ask for a plan, they will present a plan and begin executing it in the same turn. Without explicit STOP-AND-WAIT instructions at every gate, you are not in the loop. You are watching a recording.

Hardcoding schema details into prompts.

I once had four prompts referencing a database column that no longer existed. Each prompt worked in isolation. Together they corrupted data in three places. The fix: one canonical file — `DATABASE.md` — that every prompt reads before touching the schema.
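As a rough sketch of how that single source of truth could be enforced, the snippet below scans a prompt for column references that `DATABASE.md` no longer lists. The `table.column` backtick convention, the function names, and the sample schema are all assumptions made for illustration; the article does not describe the actual file layout.

```python
import re

# Assumed convention (not from the article): columns are written as
# `table.column` in backticks, both in DATABASE.md and in prompt files.
COLUMN_REF = re.compile(r"`([a-z_]+\.[a-z_]+)`")


def known_columns(database_md: str) -> set[str]:
    """Collect every `table.column` reference listed in the canonical schema file."""
    return set(COLUMN_REF.findall(database_md))


def stale_references(prompt_text: str, database_md: str) -> list[str]:
    """Return column references in a prompt that the schema file does not list."""
    referenced = set(COLUMN_REF.findall(prompt_text))
    return sorted(referenced - known_columns(database_md))


if __name__ == "__main__":
    # Hypothetical schema and prompt, for illustration only.
    schema = "## users\n- `users.id`\n- `users.email`\n"
    prompt = "Update `users.email` and backfill `users.plan_tier`."
    print(stale_references(prompt, schema))  # ['users.plan_tier']
```

Run before a session, a check like this would flag a prompt that still points at a dropped column before the model ever reads it.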

Trusting the merge.

Blanket conflict resolution, whether `git merge -X theirs`, `git merge -X ours`, or `git checkout --theirs` / `--ours` on a conflicted file, is now banned from my workflow under any circumstance. Conflicts must be resolved by a human reading both sides, not by a model picking one.

Believing "it works" equals "it is safe."

A feature that passes a test is not the same as a feature that will not break the three features deployed around it. Regression is the silent killer of vibe-coded systems — the bug that ships when you stop looking.

What worked

That April 3 night I went looking for structure, and I found it in gstack, Garry Tan's engineering workflow for Claude Code. Garry runs Y Combinator, and he describes gstack as "exactly my setup for agentic engineering."

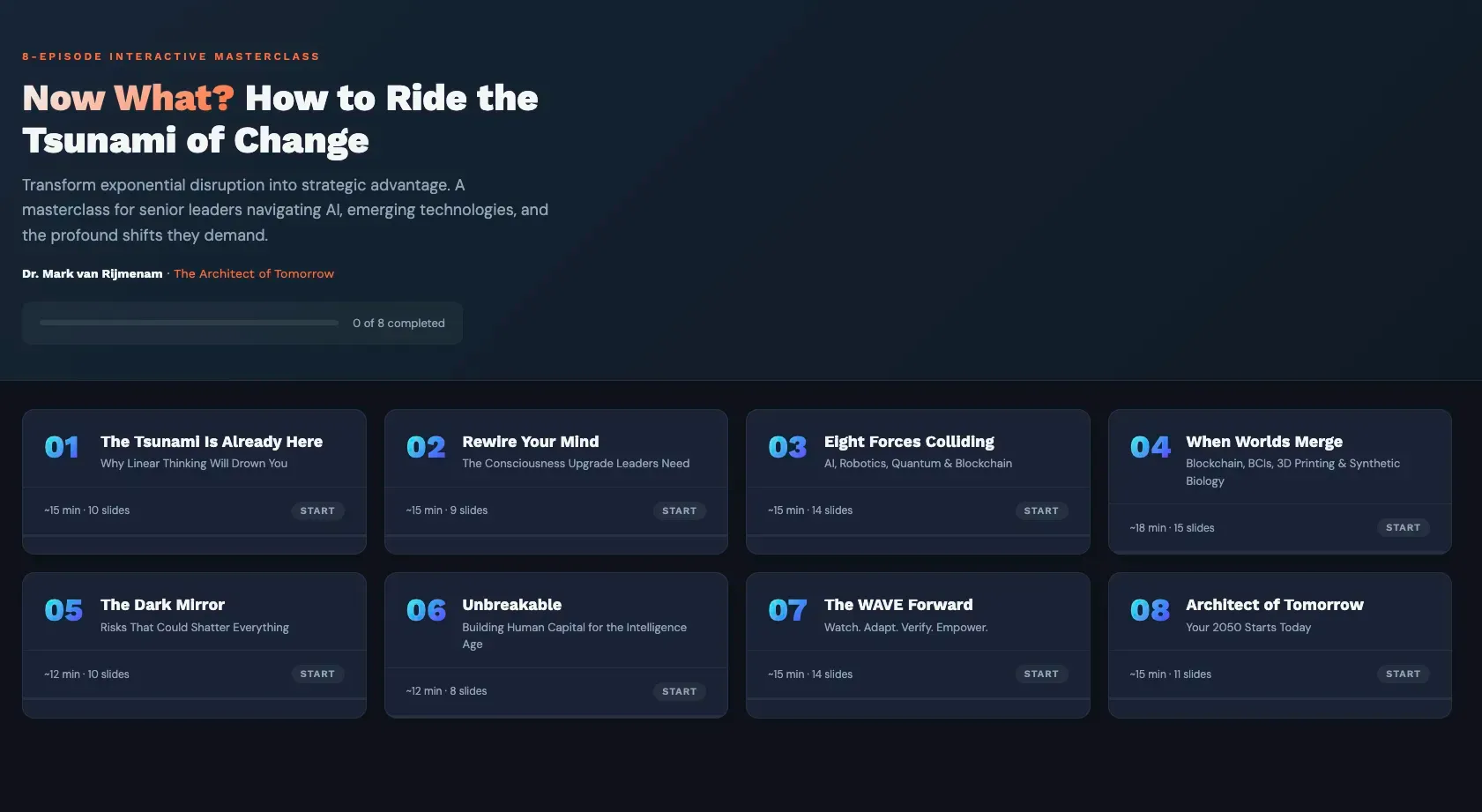

It translates the structured sprint cycle of real engineering organizations into slash commands: /office-hours to reframe the problem before writing code, /plan-eng-review to lock the architecture, /plan-ceo-review to challenge scope, and /review, /qa, /ship to audit, test, and commit.

I installed it into my repo, wrote a CLAUDE.md file that persists context across sessions, and stopped treating Claude Code like a search bar. Every working pattern that follows in this article sits on top of that scaffolding. I did not invent the discipline; I borrowed it from a professional. You do not need to reinvent software engineering to vibe code well. You need to stop skipping the parts of it that matter.

Every working pattern I now use emerged from a specific failure. None of it is theoretical.

One logical change per session. No changes outside scope.

When the model wants to tidy something adjacent, the answer is no. That tidy becomes the next session's prompt, with its own review and its own commit.
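One way to make "no changes outside scope" checkable rather than aspirational is to compare the files a session touched against the scope declared up front. This is a minimal sketch, assuming the scope is written as glob patterns and the changed-file list comes from something like `git diff --name-only`; the function name and paths are hypothetical.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return every changed file that matches none of the session's allowed patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in allowed_patterns)
    ]


if __name__ == "__main__":
    # Hypothetical session: the declared scope is the checkout code only.
    changed = ["src/checkout/stripe.ts", "src/report/render.ts"]
    print(out_of_scope(changed, ["src/checkout/*"]))  # ['src/report/render.ts']
```

Anything the check returns is a candidate for the next session's prompt, not for this session's commit.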

Two AI surfaces, two jobs.

Claude Code is not the only model in my workflow, and it is not the most important one. The architecture, the plans, the prompts, and the post-session analysis all live in a separate Claude chat.

The chat is the architect; Claude Code is the builder. Before any implementation session, I have already worked through the problem in chat: tradeoffs interrogated, schema decisions settled, prompt engineered. After the build, I paste Claude Code's output (diffs, logs, screenshots, error traces) back into chat for review.

The second pair of AI eyes catches what the first missed: regressions the builder wanted to dismiss, assumptions it quietly made, edge cases it chose not to surface. You would not ask your framer to sign off on your load-bearing walls. Same logic applies here.

A three-gate cycle with mandatory human review.

Discovery first: the model investigates, presents findings, stops. Then engineering plan: exact SQL, exact code, presented, stopped. Then execution: the model builds, verifies, and only then commits. I answer every question at every gate. The model does not proceed without sign-off. This is slower than unconstrained generation. It also ships.
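The gate logic can be pictured as a small state machine in which nothing advances without explicit human sign-off. The sketch below is illustrative only: the class, the gate names as strings, and the `approved_by_human` flag are assumptions, not part of gstack or Claude Code.

```python
from typing import Optional

# The three gates, in the order the article describes them.
GATES = ["discovery", "plan", "execution"]


class GateError(RuntimeError):
    """Raised when a gate is skipped or lacks human sign-off."""


class GatedSession:
    def __init__(self) -> None:
        self.signed_off: list[str] = []

    def next_gate(self) -> Optional[str]:
        """The gate currently awaiting review, or None once all are cleared."""
        index = len(self.signed_off)
        return GATES[index] if index < len(GATES) else None

    def sign_off(self, gate: str, approved_by_human: bool) -> None:
        """Record sign-off for the current gate; refuse skips and self-approval."""
        if gate != self.next_gate():
            raise GateError(f"expected gate {self.next_gate()!r}, got {gate!r}")
        if not approved_by_human:
            raise GateError(f"gate {gate!r} needs explicit human approval")
        self.signed_off.append(gate)

    def may_commit(self) -> bool:
        """Committing is allowed only after every gate has been signed off."""
        return self.signed_off == GATES
```

The point of the design is that skipping from discovery straight to execution is an error, not a shortcut.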

A regression guard file, updated every session.

Every behavior, UI decision, and architectural pattern that could be lost in a future merge is appended to a `REGRESSION-GUARD.md` file. Every subsequent session must verify each item is intact before committing. That single document has saved me more times than I can count.
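To illustrate how that verification step might be mechanized, the sketch below treats `REGRESSION-GUARD.md` as a markdown checklist that is reset at the start of each session and must be fully ticked before a commit. The checklist format and the function name are assumptions; the article does not specify how the file is laid out.

```python
import re

# Assumed format: one guard item per line, as "- [ ] item" or "- [x] item".
ITEM = re.compile(r"^- \[( |x|X)\] (.+)$")


def unverified_items(guard_md: str) -> list[str]:
    """Return guard items that have not been ticked off in this session."""
    pending = []
    for line in guard_md.splitlines():
        match = ITEM.match(line.strip())
        if match and match.group(1) == " ":
            pending.append(match.group(2))
    return pending


if __name__ == "__main__":
    # Hypothetical guard entries, for illustration only.
    guard = "- [x] Checkout redirects to the report page\n- [ ] Report header uses brand tokens\n"
    print(unverified_items(guard))  # ['Report header uses brand tokens']
```

A commit would go ahead only when the returned list is empty.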

A session workflow that is loaded before any work begins.

Every Claude Code session I open begins by reading three files: session workflow, coding standards, and a project-specific skill file. The rules are not negotiated mid-session. They are the first thing in context.

Database migrations before code deploys. Always.

A schema change that ships after the code depending on it takes the whole system down. I treat migration order as non-negotiable deployment discipline, not a preference.
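The ordering rule is simple enough to encode. A minimal sketch, assuming migration files carry zero-padded numeric prefixes so that filename order equals apply order; the step labels and file names are hypothetical and stand in for whatever deployment commands are actually used.

```python
def release_plan(migration_files: list[str], deploy_step: str = "deploy code") -> list[str]:
    """Build a release plan: all migrations in filename order, then the deploy."""
    ordered = sorted(migration_files)
    return [f"apply migration {name}" for name in ordered] + [deploy_step]


def migrations_come_first(plan: list[str]) -> bool:
    """True if no migration step appears after a non-migration step."""
    seen_deploy = False
    for step in plan:
        is_migration = step.startswith("apply migration ")
        if is_migration and seen_deploy:
            return False
        if not is_migration:
            seen_deploy = True
    return True


if __name__ == "__main__":
    plan = release_plan(["0003_add_scores.sql", "0002_add_orders.sql"])
    print(plan)
    print(migrations_come_first(plan))  # True
```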

Verification sessions that cannot write code.

After any significant build, I open a new session whose only job is to check the work. It is not allowed to modify anything. This separation prevents the most insidious bug in AI-assisted coding: the model fixing its own mistakes before you see them.

Session hand-offs before the model forgets.

Chat sessions have a useful length. Past some threshold, somewhere between two and four hours of dense back-and-forth, the model begins to drop context. It asks a question whose answer was settled earlier in the session. It contradicts a decision from an hour ago. It re-suggests an approach you already rejected.

The signal is subtle; the damage is not.

When I catch it, I stop and ask the model to write a session hand-off document: current state, decisions made, open questions, next scope, files touched. I open a fresh chat and paste the hand-off as the first message. Continuity preserved; context clean. This is how you run a five-week build with a tool that technically has no memory of last Tuesday.
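The hand-off document has a fixed shape, so it can be templated. The sketch below renders the five sections named above as markdown; the class name, field names, and heading style are assumptions for illustration, not the format actually used in this build.

```python
from dataclasses import dataclass, field


@dataclass
class HandOff:
    """The five sections of a session hand-off, as listed in the article."""
    current_state: str
    decisions_made: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)
    next_scope: str = ""
    files_touched: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the hand-off as a document to paste into a fresh chat."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        sections = [
            ("Current state", self.current_state),
            ("Decisions made", bullets(self.decisions_made)),
            ("Open questions", bullets(self.open_questions)),
            ("Next scope", self.next_scope or "(not set)"),
            ("Files touched", bullets(self.files_touched)),
        ]
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)
```

Empty sections are rendered explicitly, so a missing decision or open question is visible in the new session.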

The blueprint

For any executive considering vibe coding a real product, the minimum viable discipline:

1. Write down the rules of engagement before you write the first prompt. Session scope, gate structure, what the model cannot do without asking.

2. Maintain a single source of truth for anything shared across prompts — schemas, brand tokens, API contracts. Refer to it. Never duplicate it.

3. Use explicit STOP-AND-WAIT gates. Assume the model will default to forward motion; design against it.

4. Run two AI surfaces. One to think and analyze, one to build. Use the thinker to plan the work, write the prompt, and review the output.

5. Treat verification as a separate activity, in a separate session, with no write access.

6. Build a regression guard. Update it every session. Check it every session.

7. Commit small. Deploy smaller. One logical change at a time, every time.

8. Watch for context decay. When the model starts forgetting, write a session hand-off and open a fresh chat.

None of this is exciting. All of it compounds.

A peek at what is coming

The Intelligence Age Scorecard itself is the proof. It runs on a stack I do not personally maintain, orchestrated by a model I do not personally operate, built end-to-end by one non-developer and an AI, under the same discipline I have described here.

The individual assessment opens to the public this week, with a team version for enterprise immediately after. When you sit through it, you will be moving through a system whose entire history of failures is now encoded as the rules that protected its success.

Vibe coding is real. It is also a serious instrument. Treated with respect, it collapses the distance between an executive's idea and shipped software from months to weeks. Treated carelessly, it produces something that looks like software and behaves like a liability.

The leverage is in the judgment. As it always is.