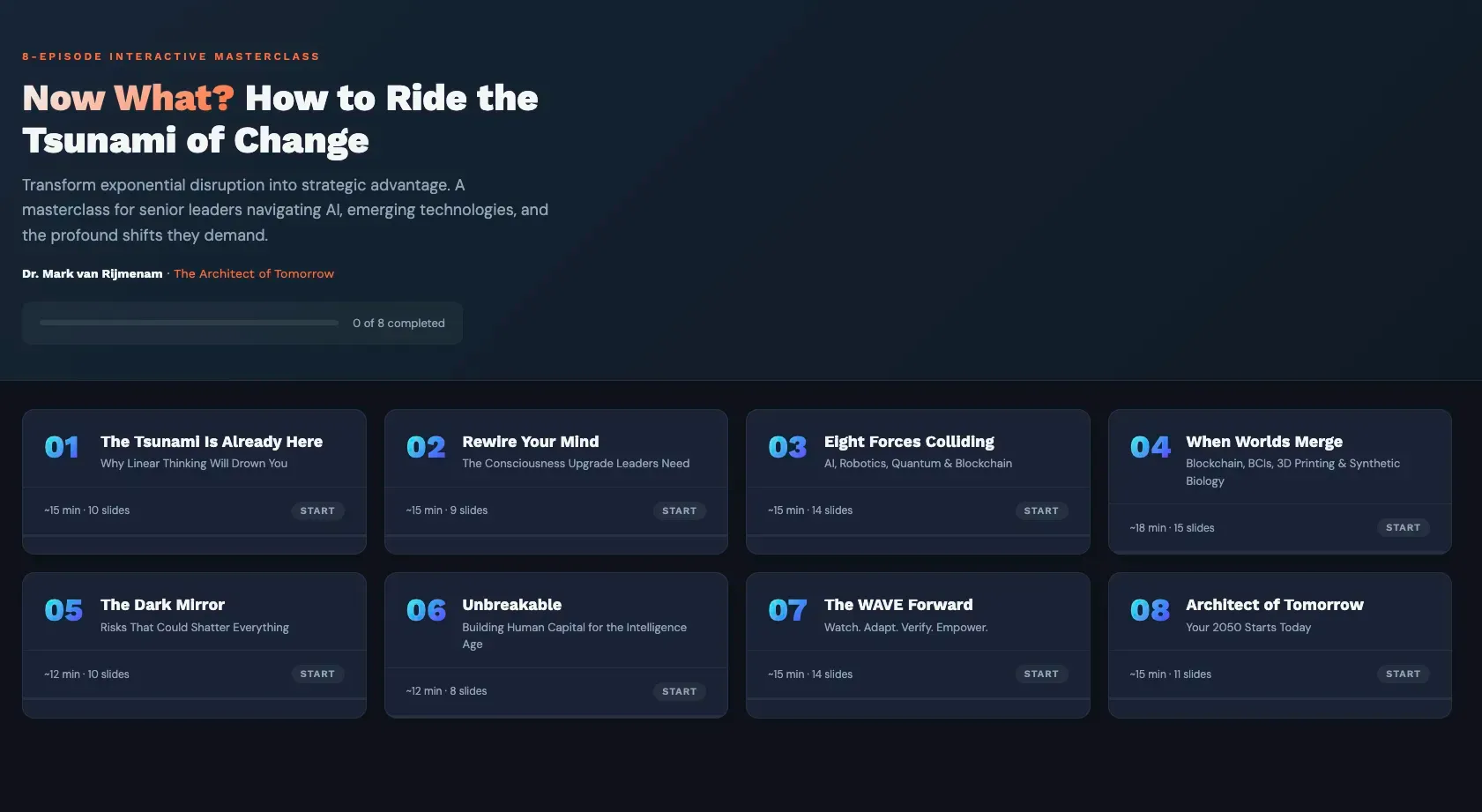

Exploring AI: Impact and Ethics of Large Language Models & Persuasive Bots

In the vast expanse of the digital universe, where information flows ceaselessly, and connectivity thrives, a new force has emerged, poised to reshape the very fabric of the online landscape. This force, known as Large Language Models (LLMs), is set to unleash a flood of transformation upon the internet, forever altering how we create, consume, and interact with content.

With the rapid advancements in artificial intelligence and natural language processing, LLMs have emerged as the pinnacle of human-like content generation. These sophisticated algorithms, powered by vast amounts of data and the ability to understand context and nuance, have already made their presence felt across various domains. From chatbots and virtual assistants to content creation and translation, LLMs have become the cornerstone of modern language technology, with, of course, ChatGPT at the forefront of it all.

In this article, we explore the LLM revolution, delving into its far-reaching implications for the internet as we know it. We will uncover the diverse applications of LLMs, examining how they are rewriting the rules of content creation, enhancing user experiences, and fueling innovation at an unprecedented scale.

But first, let's understand what makes LLMs a formidable force. These models are trained on massive datasets comprising vast collections of human-generated text from diverse sources, allowing them to learn patterns, context, and even the intricacies of grammar and style. With this immense capacity for understanding and generating human-like text, LLMs have the potential to redefine how information is presented and consumed on the internet.

Join me on this captivating journey as we navigate through the rising tide of LLMs and witness how they are set to reshape the digital landscape. From revolutionising content creation to personalised user experiences, from automated summarisation to effortless translation, the impact of LLMs on the internet is set to be profound.

Exploring the World of Language Models and Their Applications

LLMs, or Large Language Models, are state-of-the-art AI systems that have revolutionised natural language processing. Built on sophisticated deep learning architectures and trained on vast amounts of text, LLMs can produce human-like responses and follow the contextual intricacies of conversations.

LLMs possess impressive capabilities that empower them to engage users in dynamic and meaningful conversations. They excel at adapting their responses to individual users, offering personalised interactions and tailored recommendations. These systems find practical applications in customer service, where they can provide prompt and accurate support, resolving queries with efficiency.

In the realm of social media, LLMs have transformed influencer marketing. Persuasive bots can imitate the writing style and personality of specific people, producing interactions that feel authentic and engaging to followers. This opens up new possibilities for content creation, community development, and brand promotion, but of course, it also opens a Pandora's Box of problems, as we will see.

LLMs have proven their worth in content generation tasks. Whether crafting news articles, blog posts, or marketing materials, LLMs automate the process while maintaining a high standard of quality and coherence.

However, the impact of LLMs extends beyond customer service, influencer marketing, and content creation. These language models hold promise in fields like virtual assistants, educational tools, and even psychological therapy, providing personalised support and guidance in various domains.

As LLMs advance, their applications and capabilities are set to expand, presenting exciting opportunities alongside significant ethical challenges. Understanding the potential of LLMs and their influence on our digital interactions is essential as we navigate the ever-evolving landscape of artificial intelligence.

From Language Evolution to Digital Dominance: The Rise of LLMs

LLMs have experienced a remarkable surge in recent years, driven by significant technological advancements. These sophisticated language models, such as GPT-3, GPT-4, Bard, and Claude, have demonstrated extraordinary language generation capabilities and the ability to replicate human-like interactions.

One notable area where LLMs have excelled is in customer service and support. These systems provide personalised support, quick replies, and enhanced client experiences by using their considerable knowledge and linguistic skills. LLMs have also made significant contributions as virtual assistants, aiding users in various tasks and managing information efficiently. In addition, their content generation capabilities have streamlined the creation of engaging and high-quality content.

What sets the current rise of LLMs apart is the increased accessibility they offer. Developers and businesses can easily integrate LLM capabilities into their own applications and services through cloud-based platforms and APIs. This accessibility democratises LLM technology, enabling a broader range of users to harness the power of advanced language processing and driving innovation across different fields.

What is even more fascinating is how LLMs are democratising content creation. They've levelled the playing field by empowering individuals and organisations to generate high-quality content more efficiently. Overcoming writer's block becomes more manageable, and even creating engaging blog posts or entire books becomes feasible for a broader audience. This accessibility fosters a more inclusive environment for content creation, amplifying diverse voices and perspectives.

While tools like ChatGPT provide opportunities for overcoming writer's block and facilitating content creation, they also pose challenges related to the potential flood of bot-generated content on the internet. The ease of generating text using AI models can worsen the existing problem of information overload. The sheer volume of content generated by bots can make it difficult for users to identify trustworthy and reliable information. Implementing effective measures for content curation, fact-checking, and verifying the authenticity of sources becomes essential. Striking a balance between the accessibility and benefits of AI-generated content and the need for quality control is crucial to mitigating information overload. By combining AI tools with human editorial oversight, it is possible to maintain a healthy information ecosystem that amplifies diverse voices while ensuring the integrity and credibility of online content.

With strong language generation skills, applications in customer service, virtual assistance, content creation, and increased availability to the general public, LLMs are poised to play an integral role in shaping the future of digital communication.

The Potential of LLMs to Empower Persuasive Communication

LLMs can be persuasive because of their extensive training on vast amounts of text data. They have a strong grasp of language patterns, allowing them to generate coherent and grammatically correct responses. LLMs also excel at picking up context and can tailor their output to match the given prompt, making their responses appear intelligent and informed. They can also generate engaging and appealing content, drawing from a wealth of information to provide detailed explanations or compelling arguments.

Language has long been recognised as a potent tool for persuasion, shaping our thoughts, beliefs, and actions. In the digital era, where text-based communication dominates, the persuasive power of language takes on a new dimension. LLMs, with their advanced language processing capabilities, have the potential to revolutionise persuasive communication on the internet.

At the heart of persuasive communication lies the ability to engage and convince the audience. LLMs shine in this area by leveraging their understanding of context, nuances, and even emotions. Having been trained on extensive data, these language models can generate responses that resonate with users, fostering emotional connections and capturing attention.

Aside from that, LLMs possess the impressive capability to adapt and personalise their responses for individual users. These systems may adjust their persuasive strategies by assessing user inputs and contextual data, making the dialogue feel more pertinent and relatable. Whether it is adjusting tone, incorporating personalisation, or crafting compelling narratives, LLMs have the potential to create highly persuasive and captivating interactions.

The implications of LLMs' persuasive capabilities are far-reaching. From marketing and advertising to political discourse and public opinion, LLMs can actively shape narratives, influence perceptions, and guide decision-making processes.

However, the ethical considerations surrounding the use of persuasive LLMs are significant. When people interact with LLMs, transparency and disclosure are essential to preventing deception or manipulation. Establishing ethical guidelines for the responsible deployment of persuasive LLMs is crucial to maintaining trust and upholding the integrity of online interactions, which is an issue we will discuss later in this article.

As LLMs continue to advance, their potential for persuasive communication will grow. Recognising the power of language and understanding how LLMs can adapt and personalise their responses opens up exciting possibilities in marketing, advocacy, and public discourse. However, it is vital to approach the use of persuasive LLMs with thoughtfulness and ethical considerations to navigate the complex landscape of influence and persuasion in the digital realm.

Exemplary LLMs: Pioneers Shaping the Future of Language Generation

Among the wide array of LLMs, several have garnered significant attention and achieved remarkable success. These models have pushed the boundaries of language generation and significantly impacted their respective domains. Here are a few notable examples:

1. GPT-4 (Generative Pre-trained Transformer 4)

The latest iteration in OpenAI's series of language model systems is GPT-4. Its previous version, GPT-3.5, powered the widely popular ChatGPT chatbot launched in November 2022, which I used to write my fifth book, Future Visions. With its emphasis on multimodality, GPT-4 can process both text and image inputs and produce human-like text as output. This enables tasks such as uploading a worksheet for scanning and generating responses to its questions, or analysing uploaded graphs to perform calculations based on the presented data. According to OpenAI, GPT-4 demonstrates a significant improvement over GPT-3.5. It is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than its predecessor. These improvements highlight the advancements made in content adherence and factual accuracy within the GPT-4 model.

2. BERT (Bidirectional Encoder Representations from Transformers)

BERT, developed by Google, has revolutionised natural language processing tasks, particularly in understanding context and semantics. By pre-training on a large corpus of text, BERT has achieved remarkable success in tasks such as sentiment analysis, named entity recognition, and question-answering. Its effectiveness in understanding the intricacies of language has made it a go-to model for various NLP applications.

3. T5 (Text-to-Text Transfer Transformer)

T5, developed by Google Research, is a versatile LLM known for performing a wide range of natural language processing tasks. By framing different tasks as text-to-text problems, T5 can generate outputs for tasks like translation, summarisation, question-answering, and text classification. This adaptability and flexibility have made T5 highly successful in the NLP community.

4. Bard

Bard, developed by Google, is a conversational language model that has garnered significant attention in the field. With its advanced training techniques and large parameter count, Bard generates coherent and contextually rich text. It is well-suited for story writing and creative content generation tasks, where it can produce human-like and imaginative narratives.

5. Anthropic's Claude

Claude has been designed to understand and generate text that captures human intentions, beliefs, and values. By combining deep learning techniques with a focus on interpretability and explainability, Anthropic aims to create AI systems that align with human preferences. Its approach prioritises the development of transparent, reliable, and collaboratively capable AI systems. With Claude, Anthropic seeks to bridge the gap between human language and machine learning models, fostering responsible and beneficial AI development.

6. Hugging Face

Hugging Face is not a model but an American company that has developed a popular open-source library called Transformers. This library provides a wide range of pre-trained models, including various state-of-the-art language models like GPT, BERT, and RoBERTa. It offers a user-friendly interface for implementing and fine-tuning these models, making it easier for researchers and developers to use them in their natural language processing tasks. The Transformers library has gained significant popularity within the NLP community as a resource for building and deploying advanced language models.

7. Alpaca

Alpaca is an instruction-following language model released by researchers at Stanford University. It was fine-tuned from Meta's LLaMA 7B model on roughly 52,000 instruction-following demonstrations generated with OpenAI's text-davinci-003. Alpaca showed that a relatively small model can follow instructions reasonably well at a low training cost, which makes it a useful starting point for researchers and developers working on natural language processing tasks.
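Returning to T5's text-to-text framing from the list above, the idea can be illustrated without loading any model. The sketch below only builds the input strings that such a model would receive. The task prefixes follow the convention described for T5, while the function name `to_text_to_text`, the dictionary keys, and the example sentences are assumptions made for illustration.

```python
# Illustrative only: builds T5-style input strings; no model is loaded or called.
TASK_PREFIXES = {
    "translate_en_de": "translate English to German: ",
    "summarise": "summarize: ",
}


def to_text_to_text(task: str, text: str) -> str:
    """Return the single input string a text-to-text model would receive."""
    if task not in TASK_PREFIXES:
        raise ValueError(f"unknown task: {task}")
    return TASK_PREFIXES[task] + text.strip()


if __name__ == "__main__":
    print(to_text_to_text("translate_en_de", "That is good."))
    print(to_text_to_text("summarise", "LLMs are trained on large text corpora."))
```

Because every task becomes "text in, text out", the same model and training procedure can serve translation, summarisation, and classification alike; only the prefix changes.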

These successful LLMs have propelled the field of natural language processing and opened up new possibilities for human-machine interaction and content generation. They have set new benchmarks for language generation capabilities and continue to inspire further research and advancements in the field. With their ability to push the boundaries of what is possible with language models, these models have made a significant impact and continue to shape the landscape of AI-powered language processing.

How LLMs and Persuasive Bots Benefit Organisations

Language models and persuasive bots powered by LLMs hold immense potential for organisations across various industries. As these technologies continue to evolve, they offer exciting opportunities to enhance customer engagement, streamline operations, and drive business growth. Here, we explore the future possibilities and the benefits organisations can derive from LLMs and persuasive bots.

Efficient Customer Support

Organisations can utilise persuasive bots to augment their customer support operations. LLMs can assist in automating responses to frequently asked questions, resolving common issues, and escalating complex queries to human agents when necessary. By relieving the customer support team of repetitive tasks, organisations can free up resources and focus on more critical and specialised customer service areas.
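The answer-or-escalate pattern described above can be sketched in a few lines. This is a toy under stated assumptions: simple keyword matching stands in for an LLM, and the FAQ entries, the `Reply` type, and the `answer_or_escalate` function are all hypothetical names invented for this example.

```python
import re
from dataclasses import dataclass

# Hypothetical FAQ: each entry pairs required keywords with a canned answer.
# A real deployment would use an LLM or a retrieval system instead.
FAQ = [
    ({"reset", "password"}, "You can reset your password from the account settings page."),
    ({"opening", "hours"}, "Support is available on weekdays from 9:00 to 17:00."),
]


@dataclass
class Reply:
    text: str
    escalated: bool  # True when the query is handed to a human agent


def answer_or_escalate(query: str) -> Reply:
    """Answer from the FAQ when all keywords of an entry appear; otherwise escalate."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    for keywords, answer in FAQ:
        if keywords <= words:  # every required keyword is present in the query
            return Reply(text=answer, escalated=False)
    return Reply(text="Let me connect you with a human agent.", escalated=True)


if __name__ == "__main__":
    print(answer_or_escalate("How do I reset my password?"))
    print(answer_or_escalate("My invoice shows a double charge."))
```

The design choice worth noting is the explicit `escalated` flag: anything the bot cannot match confidently goes to a person rather than receiving a guessed answer.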

Personalised Marketing Campaigns

LLMs have the potential to revolutionise marketing by enabling organisations to generate highly targeted and persuasive content. LLMs can craft personalised marketing messages, advertisements, and recommendations based on customer data and preferences. This level of personalisation can significantly improve customer engagement, increase conversion rates, and enhance overall marketing effectiveness.

Efficient Content Creation

LLMs can streamline content creation processes for organisations. They can assist in generating blog posts, articles, product descriptions, and social media content. With minimal human intervention, organisations can leverage LLMs to produce high-quality, engaging content at scale, saving time and resources while maintaining consistency and relevance.

Data Analysis and Insights

LLMs can be utilised to analyse and derive insights from vast amounts of textual data, such as customer reviews, social media posts, and market research. By understanding patterns, sentiment, and emerging trends, organisations can make informed decisions, improve products and services, and optimise their business strategies.

Virtual Sales Assistants

LLM-powered persuasive bots can act as virtual sales assistants, guiding customers through the purchase journey, making personalised recommendations, and answering product-related queries. These bots can help organisations drive sales, upsell and cross-sell products, and provide an interactive shopping experience, even in online and e-commerce settings.

Language Localisation and Translation

LLMs can aid organisations in overcoming language barriers by providing accurate and contextually relevant language localisation and translation services. This can open up new markets and enable organisations to communicate effectively with a global customer base.

It is worth noting that while the benefits of LLMs and persuasive bots are significant, organisations must also be mindful of ethical considerations. When implementing these technologies, transparency, fairness, and user privacy should be prioritised.

In summary, the future of LLMs and persuasive bots holds great promise for organisations. By harnessing these technologies' power, organisations can elevate customer experiences, optimise operations, and gain a competitive edge in an increasingly digital world. Embracing the potential of LLMs and persuasive bots will empower organisations to thrive and succeed in the evolving business landscape.

Navigating Challenges and Limitations: Unveiling the Realities of LLMs

Despite their remarkable capabilities, LLMs come with challenges and limitations that necessitate careful consideration. Understanding these aspects is crucial for gaining a comprehensive perspective on the implications of LLMs in the online landscape. Here are some key insights to keep in mind:

There is a concern about the possibility of biases and misinformation in LLM-generated content. Since LLMs learn from training data, biases present in the data can inadvertently influence the content they generate. To maintain fairness and accuracy, ongoing efforts are necessary to identify and mitigate these biases.

Contextual understanding poses another significant hurdle. While LLMs excel at generating coherent text, they can encounter difficulties in accurately interpreting and comprehending context. LLMs are not infallible and can produce incorrect or nonsensical responses, particularly when faced with complex or ambiguous queries. Continuous improvement and refinement of LLM training processes and algorithms are crucial to enhancing their accuracy and reliability. As users, it is important to exercise critical thinking when consuming content generated by LLMs, considering the potential for inaccuracies or misleading information.

Balancing automation with human oversight and intervention is another key challenge. While LLMs offer efficiency and scalability, they lack human qualities such as empathy, ethical judgment, and critical thinking. Human involvement becomes essential to respect ethical boundaries and address specific cases where LLM-generated content may be inappropriate or harmful. Responsible use of LLMs, accompanied by robust safeguards and fact-checking mechanisms, is essential to combat these challenges and maintain the integrity of information online.

Interpretability and explainability are ongoing areas of research in the field of LLMs. Understanding the reasoning behind LLM outputs, especially when they operate as "black boxes," is a significant challenge. Advancements in interpretability techniques and model transparency are vital to foster trust and comprehension of LLM-generated content.

The computational resources required for training and deploying LLMs pose practical and environmental considerations. Training larger LLMs demands substantial computational power and energy consumption. Optimising the efficiency of LLM models and minimising their carbon footprint is imperative to mitigate their environmental impact.

Recognising and addressing these challenges requires ongoing research, collaboration, and the establishment of guidelines for responsible LLM deployment. It is vital to actively work towards transparency, fairness, and continuous improvement to fully leverage the benefits of LLMs in persuasive communication while minimising their limitations and potential risks.

The Dark Side of LLMs: Fueling the Post-Truth Era

While LLMs hold immense potential in various domains, it is crucial to acknowledge the negative aspects associated with these technologies. The main risk no one is really talking about is that LLMs could contribute to a post-truth world, where the internet becomes flooded with convincing yet incorrect or misleading content.

LLMs' unprecedented language generation capabilities can be exploited to create highly persuasive and believable narratives that lack factual accuracy. These models have the potential to generate misleading information, deceptive news articles, and even deepfake-like content, blurring the line between reality and fiction.

LLMs can amplify confirmation bias and echo chambers by tailoring content to individual preferences. They can reinforce existing beliefs or manipulate opinions by generating content that aligns with preconceived notions, thereby exacerbating societal divisions and inhibiting critical thinking.

The rapid spread of misinformation facilitated by LLMs can have serious consequences. It can erode trust in reliable sources of information, undermine democratic processes, and fuel social unrest. The ability of LLMs to create vast amounts of content quickly poses a challenge for fact-checking and verification processes, making it harder to distinguish truth from falsehood.

To address these concerns, researchers and developers are actively exploring methods to improve LLMs' robustness, transparency, and accountability. Techniques like explainable AI, bias detection, and fact-checking integration are being developed to mitigate the risks associated with misinformation and deceptive content generation.

Sometimes, LLMs produce outputs that sound plausible but are not grounded in reality, an issue known as "hallucination" in the AI space. If not properly guided or verified, this can lead to the generation of false or misleading information.

It is important to note that advancements in AI research have focused on improving the accuracy and reliability of language models. OpenAI and other organisations continually work to address issues related to the generation of inaccurate or misleading content by implementing techniques like prompt engineering, human review, and user feedback loops. These measures aim to enhance the quality and trustworthiness of AI-generated text.

Fake Cases in a Lawsuit

In recent news, a concerning situation unfolded when a lawyer representing a man named Mata, who had sued the airline Avianca, turned to ChatGPT for assistance in preparing a court filing. Unfortunately, the outcome was far from satisfactory. The hallucination came to light when Avianca's lawyers told the judge, Kevin Castel of the Southern District of New York, that they could not find the court cases cited in the brief prepared by Mata's lawyer, Steven A. Schwartz, using ChatGPT.

The fabricated cases mentioned in the brief did not exist in legal databases. Avianca's lawyer, Bart Banino, became suspicious when he couldn't recognise any of the mentioned cases. At this point, the unreliability of ChatGPT's content and the possibility of it generating false information became apparent. Schwartz, in response, acknowledged that he had consulted ChatGPT to supplement his legal research but had been unaware of the AI's potential for providing inaccurate information.

Indeed, this incident involving ChatGPT's AI hallucination serves as a reminder of the broader implications beyond legal proceedings. It highlights the potential risks and limitations of relying solely on AI language models in various human situations. While AI tools can be valuable resources, this incident emphasises the importance of human expertise and judgment, especially when dealing with critical tasks that require nuance, accuracy, and reliability.
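The kind of check that exposed the fabricated cases, looking each citation up in a trusted database, can be sketched as a simple lookup. This is a minimal illustration under stated assumptions: the case names are invented placeholders, the in-memory set stands in for a real legal database, and `find_unverified` is a hypothetical helper, not part of any real tool.

```python
def find_unverified(cited: list[str], known_cases: set[str]) -> list[str]:
    """Return the citations that cannot be found in the trusted set of known cases."""
    # Compare case-insensitively and ignore surrounding whitespace.
    normalised_known = {case.casefold().strip() for case in known_cases}
    return [c for c in cited if c.casefold().strip() not in normalised_known]


if __name__ == "__main__":
    # Placeholder names only; none of these refer to real court cases.
    trusted_database = {"Alpha v. Example Air", "Beta v. Sample Lines"}
    brief_citations = ["alpha v. example air", "Gamma v. Placeholder Jet"]
    for citation in find_unverified(brief_citations, trusted_database):
        print(f"Needs human review, not found: {citation}")
```

A lookup like this only flags citations for review; deciding what to do with a flagged citation still requires a human with the relevant expertise.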

Also, the potential misuse of LLMs for malicious purposes is a significant ethical concern. LLM-generated content could be used to spread disinformation, manipulate public opinion, or deceive individuals. Safeguards must be in place to prevent the unethical use of LLMs and the dissemination of harmful or misleading information.

Moreover, promoting media literacy and critical thinking skills among users is essential in navigating the information landscape effectively. Educating individuals on recognising and evaluating reliable sources of information can help build resilience against the influence of false narratives generated by LLMs.

As the capabilities of LLMs continue to advance, it is vital to strike a balance between the benefits and risks they pose. Responsible development, ethical guidelines, and proactive measures to combat misinformation are crucial to harnessing the positive potential of LLMs while mitigating their negative impacts on truth and information integrity.

While LLMs offer powerful language generation capabilities, they also raise concerns about the proliferation of convincing yet inaccurate content. It is crucial to understand and address these challenges, so we can work towards harnessing LLMs responsibly and ensuring that the internet remains a reliable source of truthful and useful information.

Responsible Implementation: Ethical Considerations for LLMs and Persuasive Bots

The rise of LLM-generated persuasive bots raises important ethical considerations that cannot be overlooked. As these language models become more advanced in their persuasive capabilities, addressing the implications and potential misuse of LLMs in persuasive communication is crucial.

The concern revolves around the potential manipulation of individuals through LLM-generated content. The persuasive nature of LLMs, coupled with their ability to adapt and personalise responses, raises questions about informed consent and the potential for undue influence. It is essential to ensure that users are aware when interacting with LLMs and have the necessary information to make informed decisions.

Privacy is another ethical consideration. Services built on LLMs may collect and analyse large amounts of user data to personalise their responses and enhance their persuasive abilities. Safeguarding user privacy and ensuring the responsible handling of data are essential to maintaining trust and protecting individuals' sensitive information.

Tools like ChatGPT can present challenges for companies when inputting their data into the system. There is a concern that proprietary data could end up in the hands of organisations like OpenAI, raising issues of data privacy and intellectual property protection. In such cases, open-source alternatives can offer a potential solution. Open-source models provide transparency and allow companies to deploy and customise them on their own infrastructure, reducing the risk of sharing sensitive data with third parties. With open-source alternatives, companies can have more control over their data while still benefiting from the power of natural language processing models. This approach addresses the need for privacy and security while leveraging advanced AI capabilities.

Transparency and accountability are crucial in the ethical deployment of LLMs. Users should be informed when they are interacting with LLMs and understand the limitations of these systems. Clear guidelines and regulations should be established to govern the use of LLMs in persuasive communication, ensuring transparency, fairness, and the protection of individuals' rights.

Addressing these ethical considerations requires collaboration among AI developers, policymakers, and society as a whole. Establishing ethical frameworks, guidelines, and regulations will help navigate the potential risks associated with LLM-generated persuasive bots and ensure their responsible and ethical use. By actively taking these ethical concerns into account and addressing them, we can make full use of LLMs while upholding ethical standards, safeguarding user rights, and fostering trust in the developing field of AI-powered persuasive communication.

Pioneering the Future: The Evolution of LLMs and the Promising Outlook for Persuasive Bots

As LLMs progress, their role in online communication and the prevalence of persuasive bots are set to undergo significant changes. Looking ahead, several key trends and developments will shape the future landscape of LLMs and their impact on various industries and society at large.

The increased integration of LLMs with other technologies, like chatbots and voice assistants, is likely. This integration will enable more seamless and natural interactions, amplifying the persuasive capabilities of these systems. Chatbots powered by LLMs can engage users in captivating conversations, while voice assistants can deliver persuasive messages with a human-like tone, transforming how we interact with technology.

The widespread use of persuasive bots has societal implications. As individuals increasingly engage with AI-driven systems, questions of trust, transparency, and accountability become crucial. Clearly labelling LLM-generated content and differentiating it from human-generated content is essential to maintaining transparency and enabling users to make informed choices. As previously mentioned, establishing ethical guidelines and regulations is necessary to prevent the misuse of persuasive bots and safeguard against undue influence or manipulation.
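One way to picture such labelling is to carry the origin of each message alongside its text and to show it whenever the message is displayed. The sketch below is an assumption-laden illustration: the `Origin` enum, the `Message` type, the `render` function, and the "[AI-generated]" label wording are all hypothetical and do not reflect any existing standard.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    HUMAN = "human"
    LLM = "llm"


@dataclass(frozen=True)
class Message:
    text: str
    origin: Origin  # recorded when the message is created, not guessed afterwards


def render(message: Message) -> str:
    """Prefix LLM-generated text with a visible label; leave human text unchanged."""
    if message.origin is Origin.LLM:
        return f"[AI-generated] {message.text}"
    return message.text


if __name__ == "__main__":
    print(render(Message("Our sale ends on Friday.", Origin.LLM)))
    print(render(Message("Thanks, see you then.", Origin.HUMAN)))
```

Storing the origin as part of the message, rather than trying to detect it later, keeps the disclosure reliable wherever the content is shown.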

While the potential of LLMs and persuasive bots is promising, ethical considerations and responsible development are of utmost importance. Collaboration between AI researchers, industry professionals, policymakers, and ethicists is necessary to shape the future of LLMs in a manner that aligns with societal values and preserves user autonomy.

As LLMs and persuasive bots continue to evolve, their impact on online communication and various industries will be profound. Striking the right balance between persuasive capabilities and ethical safeguards is crucial to harnessing the potential of LLMs while ensuring their responsible and beneficial integration into our digital landscape.

By anticipating the future trajectory of LLMs and actively participating in ethical discussions, we can create a future where persuasive bots are used ethically, adding value to people, organisations, and society.

Final Thoughts

We find ourselves at the crossroads of innovation and responsibility as we explore the fascinating world of LLMs flooding the internet with engaging, convincing, and highly persuasive bots. The implications of this technological advancement are both awe-inspiring and thought-provoking, prompting us to reflect on the future of communication in the digital age.

The rise of LLMs and persuasive bots presents opportunities for various industries and sectors. Marketing can become more personalised and impactful, political campaigns can reach a wider audience, and customer service can offer tailored solutions. However, as we embrace these advancements, we must remain vigilant about potential biases, misinformation, and privacy concerns. Responsible development, ongoing research, and collaboration across disciplines are key to navigating these challenges and shaping a future where LLMs enhance our lives while upholding ethical standards.

The journey ahead will require ongoing dialogue, transparency, and adaptability. It is not solely the responsibility of developers, policymakers, or technology experts; it is a collective effort involving all stakeholders. If we encourage an inclusive conversation that considers diverse perspectives, we can ensure that the benefits of LLMs and persuasive bots are maximised while safeguarding the well-being and autonomy of individuals.

As we embark on this transformative path, let us embrace the possibilities, celebrate the potential for positive change, and hold steadfast to our shared values. The future of online communication rests in our hands, and by harnessing the power of LLMs responsibly, we can create a digital landscape that empowers, informs, and engages while preserving the essence of human connection.